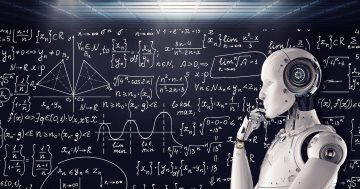

Will AI prepare students for the future or degrade their ability to think and write creatively? Image: File.

Before we begin, I want to point out that the following words are all my own. No artificial intelligence, no plagiarising.

It should be easy for regular readers to notice. If I had used AI, I’m sure the column would read much better, be more interesting and use much bigger words.

The use of AI at schools and universities is being debated around the world after it emerged that some students were using the latest technology to write their assignments. Somehow, some people think students using AI is a good thing and that banning it would be counterproductive.

Am I missing something? How can this technology be good for students? How can they possibly benefit from getting a computer to write their assignments for them?

In NSW, public schools have announced they will ban the use of AI, but private schools are indicating they will not. These private schools believe their teachers can identify work produced by AI, which is interesting, considering many experts around the world say it is almost impossible to tell the difference.

Private schools also say using AI technology will lessen the workload of over-stretched teachers. Everyone knows finding enough teachers is a problem Australia-wide. But is embracing technology that removes the need for students to do their own creative thinking the best way to make life easier for teachers?

New York schools have banned ChatGPT, the AI chatbot most favoured by students. The education department said it was concerned about negative impacts on student learning and about the safety and accuracy of the content.

Proponents of AI technology say it prepares students for the real world and that this is the way of the future. Maybe, but do employers want employees who can't actually write their own submissions, reports and analysis?

The technology is spreading like wildfire. The Australian version of Rolling Stone said it will use AI to write some of its articles. But don’t worry, it will clearly identify which articles have not been penned by a real person to save us the hassle of reading them.

At least that’s one subscription I can safely cancel.

And then some misguided Nick Cave fan sent the singer lyrics written by ChatGPT. The singer was not impressed, labelling the song “a grotesque mockery” and a “travesty”.

“This song sucks,” he declared.

I know some of you will call me a Luddite, stuck in the dark ages and wishing people still walked in front of cars carrying lanterns. But I've already expressed on this website my concern about the effect social media and other new technology are having on younger generations.

And have you tried recently to get a teenager to read a book? Impossible.

So for me, ChatGPT and other forms of AI don’t need to be embraced. If this new technology means schools and universities have to return to the days of assignments being done in the classroom using pen and paper, so be it.

The pandemic has already put a generation of students behind the eight-ball. Let’s not add to it.